H. Toya

Department of Computer Science, Caleb University, Imota, Lagos, Nigeria

Email: h.toya@calebuniversity.edu.ng

DOI: http://dx.doi.org/10.13005/ojcst17.01.07

Article Publishing History

Article Received on : 20 May 2025

Article Accepted on : 29 July 2025

Article Published : 01 Sep 2025

Plagiarism Check: Yes

Reviewed by: Dr. ANANT AGARWAL

Second Review by: Dr. JACOB ANDREAS

Final Approval by: Dr. REGINA R


ABSTRACT:

The rapid evolution of Artificial Intelligence (AI) has significantly impacted cybersecurity, offering both opportunities and challenges. While AI enhances threat detection, anomaly identification, and automated response systems, it also introduces new vulnerabilities, such as adversarial attacks and AI-powered cyber threats. This PhD thesis explores the intersection of AI and security, focusing on machine learning (ML) and deep learning (DL) techniques for cyber defense, the risks posed by malicious AI, and strategies to mitigate these threats. The research proposes novel AI-driven security frameworks, evaluates their effectiveness against emerging cyber threats, and discusses ethical considerations in AI-based security solutions.

KEYWORDS:

Artificial Intelligence, Cyber Defense Mechanisms

Copy the following to cite this article:

H. Toya. Artificial Intelligence and Security: Enhancing Cyber Defense Mechanisms. Orient. J. Comp. Sci. and Technol; 17(1).


Copy the following to cite this URL:

H. Toya. Artificial Intelligence and Security: Enhancing Cyber Defense Mechanisms. Orient. J. Comp. Sci. and Technol; 17(1). Available from https://bit.ly/3JKUpx7


Introduction

The increasing sophistication of cyber threats necessitates advanced defense mechanisms. Traditional security systems rely on predefined rules and signatures, making them ineffective against zero-day attacks and polymorphic malware. AI, particularly ML and DL, offers dynamic solutions by learning from data patterns and adapting to new threats in real time. However, adversaries are also leveraging AI to develop more sophisticated attacks, creating an ongoing arms race between attackers and defenders.

This thesis aims to:

- Investigate AI-based security models for intrusion detection, malware analysis, and fraud prevention.

- Examine adversarial AI techniques and their implications for cybersecurity.

- Develop robust AI security frameworks resilient to evasion attacks.

- Analyze ethical and regulatory challenges in deploying AI for security.

AI in Cybersecurity: Opportunities and Applications

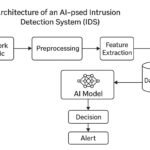

Threat Detection and Anomaly Identification

AI-powered systems can analyze large volumes of logs and network traffic to detect anomalies, identify malicious activity, and predict potential breaches. Supervised classifiers such as Random Forests, Support Vector Machines (SVMs), and neural networks learn from labeled attack data, while unsupervised techniques flag deviations from a learned baseline of normal behavior without requiring labels.
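As a rough illustration of the unsupervised side of anomaly identification, the sketch below flags values that lie far from a baseline mean using a z-score. It is a deliberately simple statistical stand-in, not one of the models named above; the function name `zscore_anomalies`, the threshold of 3.0, and the failed-login counts are all assumptions made for the example.

```python
from statistics import mean, stdev


def zscore_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value, z) for observed values far from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variance; z-scores are undefined")
    flagged = []
    for i, value in enumerate(observed):
        z = (value - mu) / sigma
        if abs(z) > threshold:
            flagged.append((i, value, round(z, 2)))
    return flagged


# Hypothetical hourly failed-login counts (illustrative data only).
baseline = [4, 6, 5, 7, 5, 6, 4, 5, 6, 5]
observed = [5, 6, 48, 4]

anomalies = zscore_anomalies(baseline, observed)
for index, value, z in anomalies:
    print(f"hour {index}: {value} failed logins (z = {z})")
```

Only the burst of 48 failed logins exceeds the threshold here. A production detector would typically use richer features and a learned model, but the baseline-versus-deviation idea is the same.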

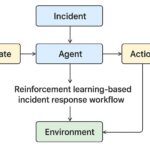

Automated Incident Response

AI enables real-time threat mitigation through automated incident response systems. Reinforcement learning (RL) can optimize response strategies, reducing the time between detection and remediation.
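To make the reinforcement learning idea concrete, here is a minimal sketch that learns which response action to take for each alert type. It is a one-step (contextual bandit) simplification of Q-learning with epsilon-greedy exploration. The alert types, actions, and reward values are hypothetical and were chosen only to illustrate the update rule; a real system would derive rewards from measured outcomes such as containment time or service disruption.

```python
import random

ACTIONS = ["monitor", "block_ip", "isolate_host"]

# Hypothetical rewards: higher means a better trade-off between
# containing the threat and disrupting legitimate activity.
REWARD = {
    ("port_scan", "monitor"): 0.2,
    ("port_scan", "block_ip"): 1.0,
    ("port_scan", "isolate_host"): -0.5,
    ("ransomware", "monitor"): -1.0,
    ("ransomware", "block_ip"): 0.1,
    ("ransomware", "isolate_host"): 1.0,
}


def train(episodes=2000, alpha=0.5, epsilon=0.2, seed=0):
    """Learn action values per alert type with epsilon-greedy exploration."""
    rng = random.Random(seed)
    states = sorted({state for state, _ in REWARD})
    q = {(s, a): 0.0 for s in states for a in ACTIONS}
    for _ in range(episodes):
        state = rng.choice(states)
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)  # explore
        else:
            action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
        reward = REWARD[(state, action)]
        # One-step value update: move the estimate toward the observed reward.
        q[(state, action)] += alpha * (reward - q[(state, action)])
    return q


def best_action(q, state):
    """Return the action with the highest learned value for this alert type."""
    return max(ACTIONS, key=lambda a: q[(state, a)])


q_values = train()
for alert in ("port_scan", "ransomware"):
    print(alert, "->", best_action(q_values, alert))
```

Because each episode here ends after a single action, there is no discounted future term; a multi-step response playbook would need the full Q-learning update.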

Phishing and Fraud Detection

Natural Language Processing (NLP) and deep learning models (e.g., Transformers) enhance phishing detection by analyzing email content, URLs, and user behavior patterns.
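Before a model can score a URL, the URL has to be turned into features. The sketch below extracts a few lexical features of the kind often fed to phishing classifiers. The feature set, the keyword list, and the function name `url_features` are assumptions for illustration and are not taken from this work.

```python
import re
from urllib.parse import urlparse

# Hypothetical keyword list; a real system would learn or curate this.
SUSPICIOUS_WORDS = ("login", "verify", "update", "secure", "account")


def url_features(url):
    """Extract simple lexical features from a URL string."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    host_is_ip = bool(re.fullmatch(r"\d{1,3}(\.\d{1,3}){3}", host))
    return {
        "length": len(url),
        "uses_https": parsed.scheme == "https",
        "host_is_ip": host_is_ip,
        # Rough heuristic: labels beyond one domain and one TLD label.
        "subdomain_count": 0 if host_is_ip else max(host.count(".") - 1, 0),
        "has_at_symbol": "@" in url,
        "suspicious_words": sum(word in url.lower() for word in SUSPICIOUS_WORDS),
    }


features = url_features("http://192.168.10.5/secure-login/verify?acct=1")
for name, value in features.items():
    print(f"{name}: {value}")
```

Features like these would typically be combined with email text and behavioral signals before being passed to a classifier.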

Table 1: Comparison of AI models for phishing detection

| Model | Accuracy | F1-Score | False Positive Rate |
|---|---|---|---|
| Random Forest | 95% | 0.94 | 2.1% |
| LSTM | 97% | 0.96 | 1.5% |
| BERT | 98% | 0.97 | 1.2% |
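For readers less familiar with the three metrics reported in Table 1, the sketch below shows how each is computed from a confusion matrix. The counts passed to the function are hypothetical and are not the data behind the table.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, F1-score, and false positive rate from raw counts."""
    total = tp + fp + tn + fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    return {
        "accuracy": (tp + tn) / total,
        "f1": 2 * precision * recall / denom if denom else 0.0,
        # Share of legitimate messages wrongly flagged as phishing.
        "fpr": fp / (fp + tn) if (fp + tn) else 0.0,
    }


# Hypothetical evaluation: 100 phishing emails and 900 legitimate ones.
metrics = classification_metrics(tp=90, fp=5, tn=895, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

On imbalanced data such as this, accuracy alone can look strong even when many phishing emails are missed, which is why F1-score and false positive rate are reported alongside it.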

Security Risks in AI Systems

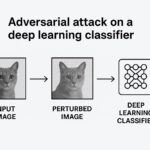

Adversarial Machine Learning

Attackers exploit AI vulnerabilities through adversarial examples, which are inputs crafted to deceive models. Techniques such as the Fast Gradient Sign Method (FGSM) and Generative Adversarial Networks (GANs) can generate inputs that bypass ML-based security systems.
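FGSM perturbs an input in the direction that increases the model's loss: x_adv = x + epsilon * sign(dL/dx). The sketch below applies it to a small logistic regression detector, for which the gradient of the binary cross-entropy loss with respect to the input is (p - y) * w. The weights, the sample, and epsilon are hypothetical values chosen so the effect is visible.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, x):
    """Probability that x is malicious under a logistic regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(value):
    return (value > 0) - (value < 0)


def fgsm(weights, bias, x, y, epsilon):
    """Return x + epsilon * sign(dL/dx) for binary cross-entropy loss."""
    p = predict(weights, bias, x)
    grad = [(p - y) * w for w in weights]  # gradient of the loss w.r.t. x
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical detector and a malicious sample (true label y = 1).
weights = [2.0, -1.0, 0.5]
bias = -0.5
x = [1.0, 0.2, 0.4]

x_adv = fgsm(weights, bias, x, y=1, epsilon=0.5)
p_clean = predict(weights, bias, x)
p_adv = predict(weights, bias, x_adv)
print(f"clean score: {p_clean:.3f}, adversarial score: {p_adv:.3f}")
```

With a decision threshold of 0.5, the original sample is detected while the perturbed one is not, even though each feature moved by at most epsilon.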

AI-Powered Cyber Attacks

Malicious actors use AI for automated hacking, deepfake-based social engineering, and AI-driven malware that adapts to evade detection.

Bias and Ethical Concerns

AI models may inherit biases from training data, leading to false positives/negatives in security decisions. Ethical concerns include privacy violations and misuse of AI surveillance.
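One simple way to check for this kind of bias is to compare false positive rates across groups of users or traffic sources. The sketch below does that for a list of labeled decisions. The group names and records are hypothetical, and the choice of false positive rate as the comparison metric is an assumption made for the example.

```python
from collections import defaultdict


def fpr_by_group(records):
    """records: iterable of (group, is_malicious, was_flagged) tuples."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, is_malicious, was_flagged in records:
        if not is_malicious:  # only benign cases can be false positives
            negatives[group] += 1
            if was_flagged:
                false_positives[group] += 1
    return {g: false_positives[g] / negatives[g] for g in negatives}


# Hypothetical audit log of detector decisions.
records = [
    ("region_a", False, False), ("region_a", False, False),
    ("region_a", False, True), ("region_a", False, False),
    ("region_b", False, True), ("region_b", False, True),
    ("region_b", False, False), ("region_b", False, False),
    ("region_b", True, True),
]

rates = fpr_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"{group}: false positive rate {rate:.2f}")
```

A large gap between groups suggests the training data or features deserve a closer look before the model is used for automated decisions.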

Proposed AI-Security Framework

This thesis introduces a hybrid AI-security framework combining:

- Deep Learning for Anomaly Detection (e.g., LSTM networks for sequential threat analysis).

- Adversarial Robustness Techniques (e.g., defensive distillation, adversarial training).

- Explainable AI (XAI) for Transparency (ensuring interpretability in security decisions).
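Of the robustness techniques listed, defensive distillation is perhaps the least self-explanatory. It trains a second model on soft labels that the first model produces with a softmax at elevated temperature. The sketch below shows only that temperature-scaled softmax step. The logits and the temperature of 20 are hypothetical, and the snippet is not the framework's implementation.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives softer outputs."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three classes (e.g., benign, malware, phishing).
logits = [4.0, 1.0, 0.0]

hard = softmax(logits, temperature=1.0)
soft = softmax(logits, temperature=20.0)
print("T = 1: ", [round(p, 3) for p in hard])
print("T = 20:", [round(p, 3) for p in soft])
```

The class ranking is preserved at high temperature, but the probabilities are much closer together. Training on these softer targets is intended to reduce the model's sensitivity to small input perturbations.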

Conclusion and Future Directions

AI revolutionizes cybersecurity but introduces new risks. Future research should focus on:

- Developing more resilient AI models against adversarial attacks.

- Establishing regulatory frameworks for ethical AI deployment.

- Enhancing human-AI collaboration in security operations.

This thesis contributes to advancing AI-driven security solutions while addressing their limitations, paving the way for safer and more intelligent cyber defense systems.


This work is licensed under a Creative Commons Attribution 4.0 International License.